The AI Horizon: May

Google launches AlphaEvolve, the new Pope warns about AI, and Altman sets the stage for an AI race with China. AI development thunders on. Here are some of the most important stories since the last issue.

This newsletter is part of Langsikt's newsletter. Sign up to read more from Langsikt here.

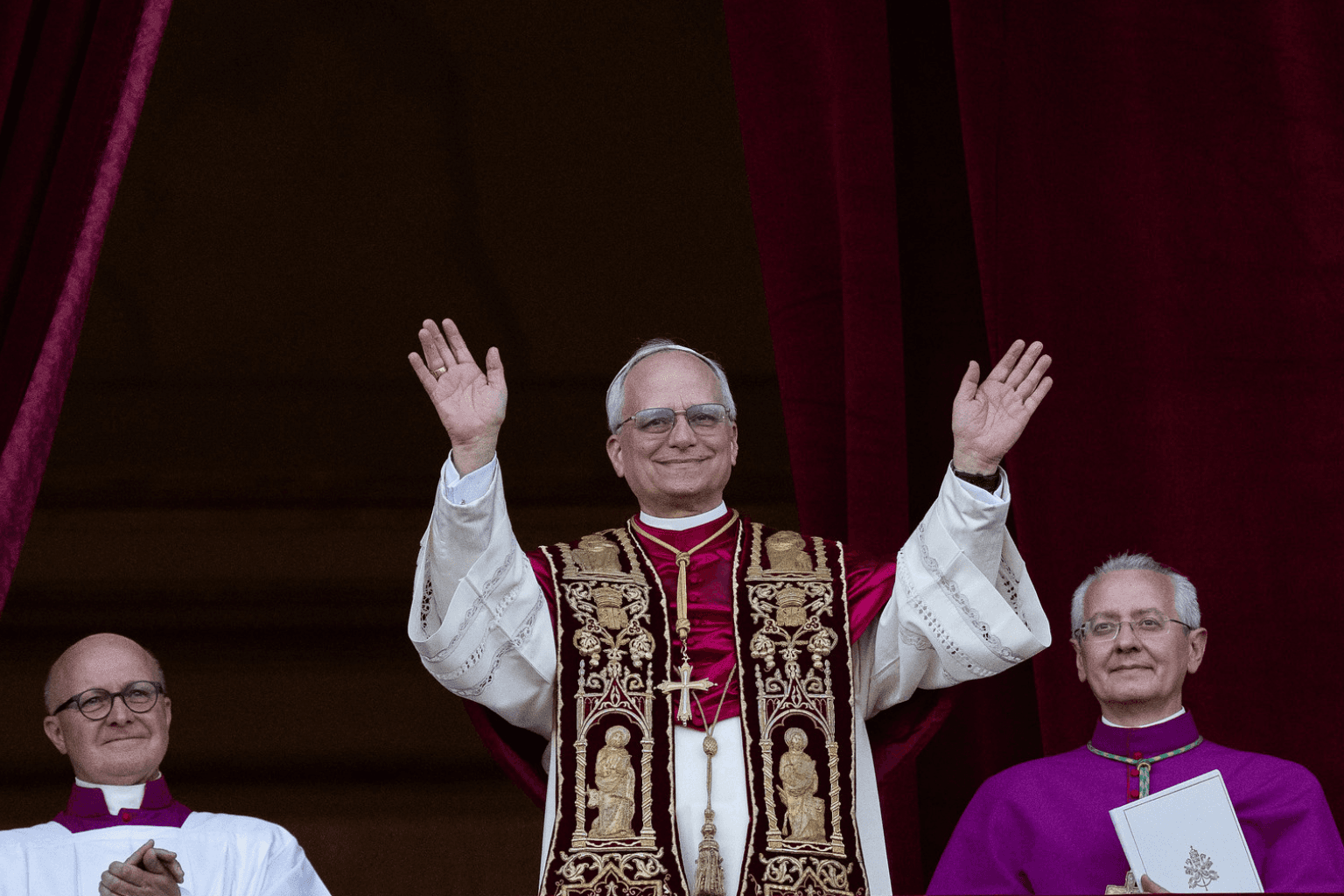

The Pope chooses an AI-inspired name

Most people are aware that we have a new Pope. But perhaps not everyone knows that he chose the name Leo XIV partly because of AI.

Explaining his choice of name, the new Pope said he was inspired by his predecessor Leo XIII, who was Pope during the Industrial Revolution. Leo XIV now says we are facing a new "industrial revolution" in AI that threatens "human dignity, justice, and labour."

The new Pope thereby continues the legacy of Pope Francis, who strongly warned that humanity must not lose control of AI.

The New York Times has an interesting article on the Pope's views on AI (read it for free here).

Google launches AlphaEvolve. A step closer to self-reinforcing AI?

Google DeepMind has launched AlphaEvolve, an AI system that can design better algorithms with minimal human intervention.

AlphaEvolve has already produced impressive results. It has delivered a 0.7% efficiency gain in Google's data centres (that sounds small, but given the sheer scale of Google's data centre operations, even a fraction of a percentage point counts enormously) and has contributed to the design of better computer chips.

The most striking thing about AlphaEvolve is that it has automatically found ways to reduce the training time of new language models. This suggests that self-reinforcing AI, where AI systems help improve the next generation of AI, could become a real possibility.

DeepMind founder and Nobel laureate Demis Hassabis summarises on X: "Knowledge begets more knowledge, algorithms optimising other algorithms — we are using AlphaEvolve to optimise our AI ecosystem, the flywheels are spinning fast..."

The Senate summons Altman for hearing 2.0

Sam Altman and other tech leaders recently testified at a Senate hearing on AI. The tone was markedly different from that of the hearings held under the Biden administration.

In 2023, Altman warned strongly about the negative consequences AI could have on society and called for government regulation and safety requirements.

Now Altman is singing a different tune, arguing that it would be "catastrophic" to require companies to test that their models are safe before releasing them to the public.

A central message from both senators and witnesses was a desire for "light touch" regulation.

Senator Ted Cruz framed the choice as follows: "In this race, America stands at a crossroads: Will we choose the path that embraces our tradition of entrepreneurial freedom and technological innovation, or will we adopt Europe's command-and-control policies?"

The takeaway from the Senate hearing is that American authorities and leading tech companies agree that AI is a critical race against China that the US must win, and that winning it means as little regulation as possible.

OpenAI yields to pressure. The non-profit retains control (for now)

OpenAI has made a U-turn, announcing that its non-profit organisation will retain control over the company.

In December 2024, OpenAI put forward plans to remove the non-profit's control over the company, which would have gone against the fundamental purpose for which the company was founded: "To ensure that artificial general intelligence (AGI) benefits all of humanity."

But now OpenAI is reversing course after heavy criticism and legal pressure. Critics, including Elon Musk, AI pioneer Geoffrey Hinton, and former OpenAI employees, argued that the change would breach the company's legal obligations.

The attorneys general of Delaware and California have also become involved in the case, which has apparently forced OpenAI to the negotiating table.

OpenAI was recently valued at a staggering $300 billion, so any change to its corporate structure is enormously consequential.

What this will mean in practice is hard to say. Critics still believe the new plan is an attempt to weaken the control of OpenAI's non-profit arm, and that we haven't seen the last of Sam Altman on this front.

If you want to read more about OpenAI's highly confusing corporate structure, Vox journalist Kelsey Piper has written two excellent articles about it, which you can read here and here.

Did you miss this?

AI development is racing ahead, and it's hard to cover everything in the newsletter. Here is a summary of some other important stories:

- A new NATO report ranks AI and quantum technology as the second most significant macro-trend affecting global security over the next 20 years.

- OpenAI had to roll back an update to GPT-4o after the model became too sycophantic with users, demonstrating how difficult "alignment" is.

- Nvidia plans to launch a downgraded version of its H20 AI chip so it can get around American export restrictions and sell the chips to China.

- Celebrity investor Paul Tudor Jones warns of an AI catastrophe after attending an event held under the Chatham House Rule with several top industry leaders (the 6-minute interview is well worth watching).

- Language models are better at persuading humans than humans are, according to this research paper.

- Will humanity gradually become obsolete because of AI? Professor David Duvenaud writes compellingly about what happens if machines can surpass us not only in work-related areas, but in all the areas that make us human.
