The AI Horizon: March

OpenAI sets the internet on fire, Google launches the world's most powerful AI model yet, and new research shows that AI models' ability to perform tasks doubles every seven months. Here are some of the most important AI stories from March.

This article is part of the Langsikt newsletter. Sign up to read more from Langsikt here.

OpenAI sets the internet on fire (again)

- OpenAI has launched a new image generation service for Sora that allows virtually anyone to create virtually anything.

- It's becoming harder to tell real images from fake ones. Counting fingers or teeth to check whether something is AI-generated no longer works.

- Sora can also produce legible text instead of the meaningless letter soup AI used to generate.

- OpenAI is challenging copyright law. It will be interesting to see who wins.

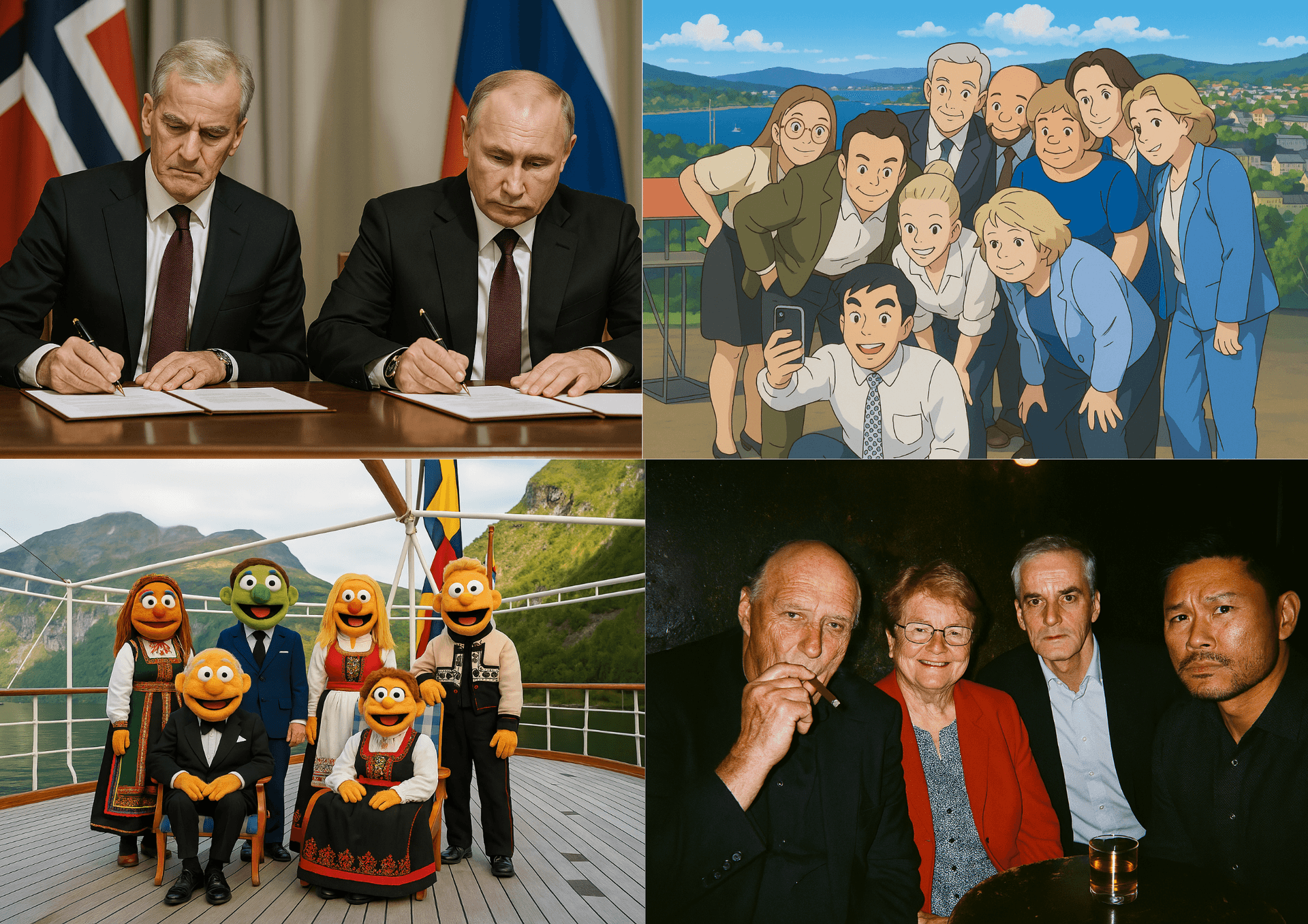

OpenAI has launched a new image generation service for Sora that allows virtually anyone to create virtually anything. With a simple prompt, ChatGPT users can turn the Norwegian royal family into Muppets, recreate a boozy evening between heads of state at a bar, or generate ultra-realistic press photos of a meeting between Putin and Støre (Norway's Prime Minister) that never took place.

Behind the images lies a new image generation technique, and the quality and accuracy of the models have improved considerably. For example, they can now generate legible text instead of meaningless word salad, which has long been a major challenge for AI. In addition, checks like counting fingers or teeth have become useless. New markers are needed to determine whether an image is AI-generated.

So what are the consequences of all this? I think we can expect the internet to be flooded with even more AI-generated content, whether it's fun family photos, fake news images, or outright slop. In terms of commercial value, this and future AI models will have a major impact on advertising and marketing agencies. In other words, it's a bad week for photographers, models, and graphic designers.

How will "the Mouse" react? | Sora

Does OpenAI not care about copyright? In theory, Sora is supposed to refuse requests to copy the style of living artists, but this is very easy to circumvent: I got no error message or refusal when I asked it to create an image of Støre as Mickey Mouse. When AI companies dare to launch something like this, it is likely because they feel confident they will get away with it. The Trump administration has made it clear that it will not introduce regulations that hinder AI development. Copyright law could still become an existential threat to AI companies, all of which train on datasets full of copyrighted material, but for now it seems the fight is over.

New research: AI models' ability to perform tasks doubles every seven months

- New research shows that AI models' ability to solve lengthy tasks doubles every seven months.

- If the trend continues, we will see AI models capable of performing an entire day's work by 2026-27, and month-long projects by the end of 2030.

- METR's research helps explain the paradox of why AI models can outperform humans on complex expert tests yet struggle with simple tasks.

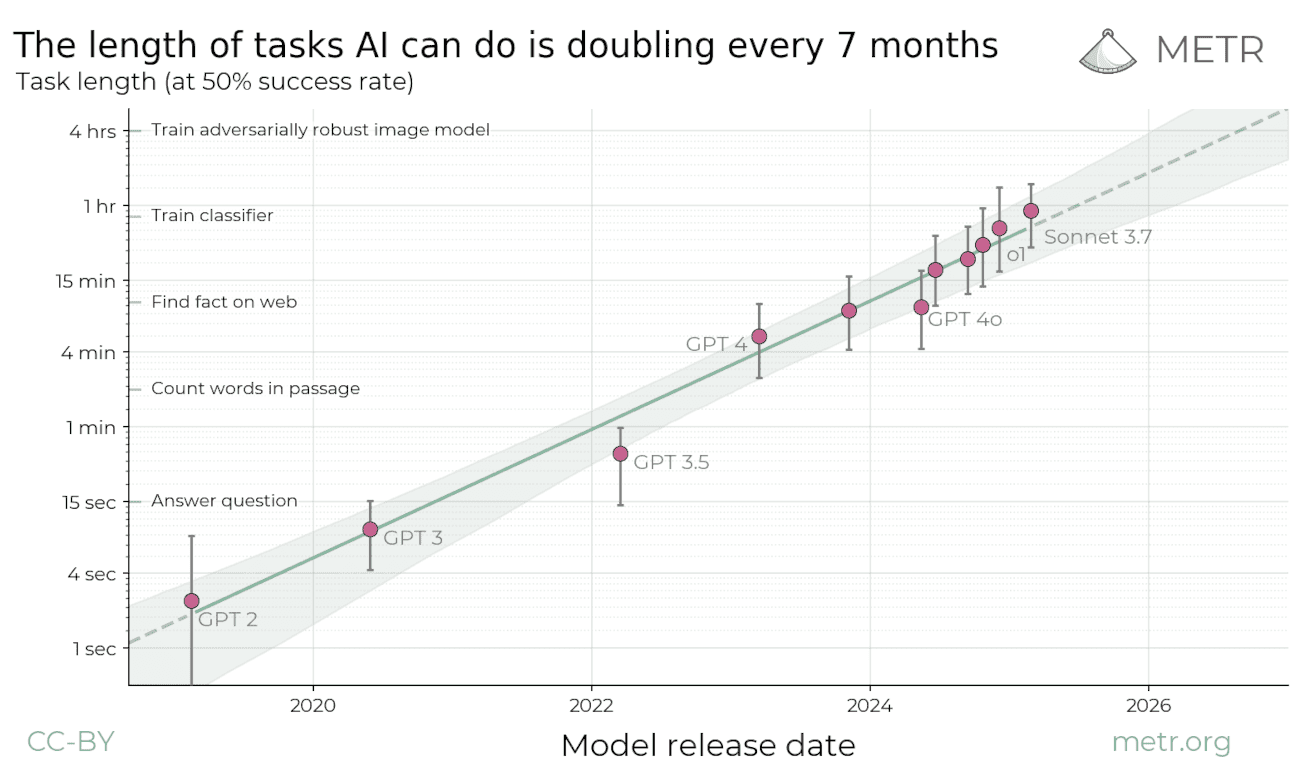

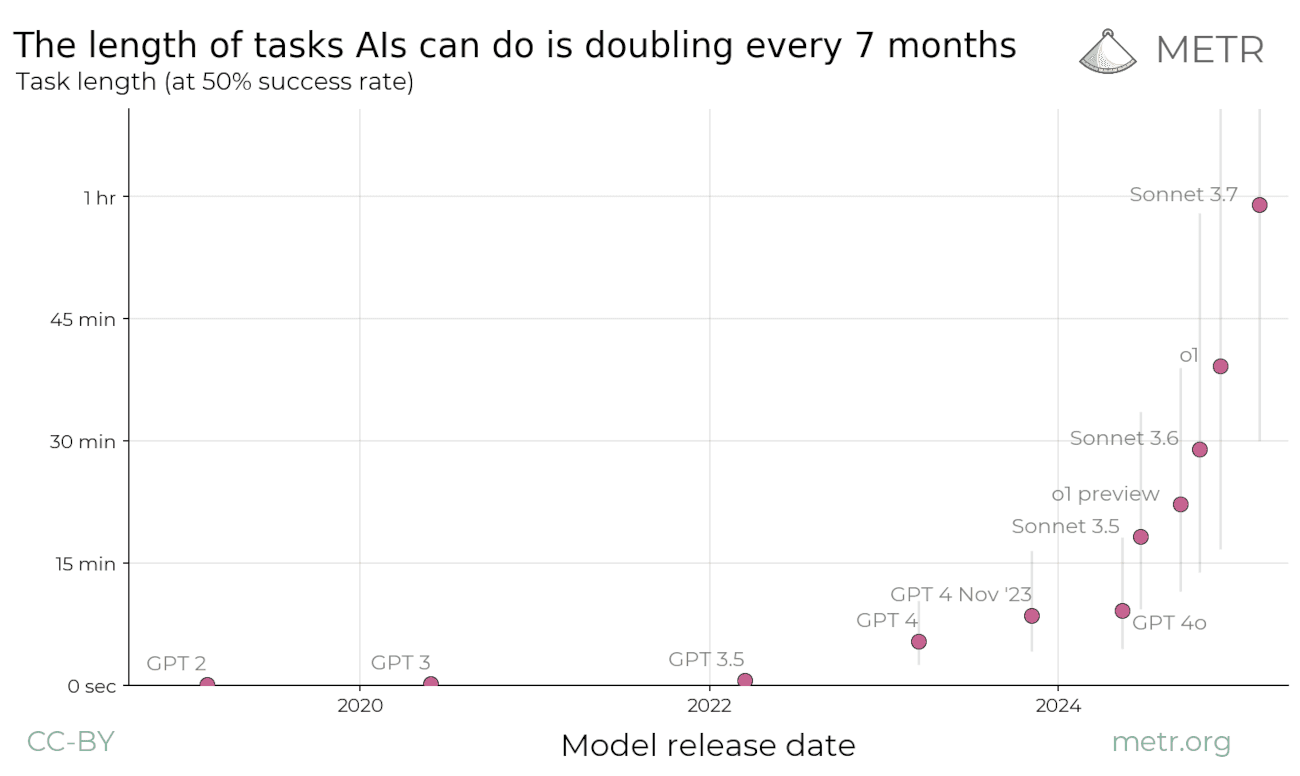

The research centre METR has investigated how long a task different AI models can complete without making errors. Task length is measured by how long a human expert would take to complete the same task, for example the time it takes to answer a question or count the words in a paragraph.

METR looks all the way back to GPT-2 in 2019, which could only solve tasks that took less than three seconds. Since then, models have grown steadily more powerful, and Anthropic's latest model can now solve tasks that take an hour. Note that the graph above uses a logarithmic scale (i.e. the axis goes from 1 sec to 4 sec to 15 sec, and so on). If you look at the linear version, you can see more clearly that the growth is exponential.

METR's research explains an important paradox: Today's AI models can outperform humans on complex knowledge tests, yet struggle to assist with ordinary work. The reason is that most practical tasks require multiple consecutive steps that build on each other. Even though AI is fantastic at individual tasks, it often loses the thread in longer sequences.

Up until now, the length of tasks models can solve has approximately doubled every seven months. How will this develop going forward? If the trend continues, we will see AI models capable of performing an entire day's work by 2026-27, and month-long projects by the end of 2030.
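
For readers who want to check the arithmetic behind this extrapolation, here is a minimal sketch. It is not METR's method, just a constant-doubling calculation under assumptions of my own: the starting point is a one-hour task horizon in March 2025 (the month of this newsletter), a working day is 8 hours, and a month-long project is about 167 working hours. The function names are hypothetical.

```python
import math
from datetime import date

DOUBLING_MONTHS = 7          # doubling time reported in the text
BASELINE = date(2025, 3, 1)  # assumption: the newsletter's month as starting point
BASELINE_HOURS = 1.0         # "tasks that take an hour", per the text


def months_until(target_hours, baseline_hours=BASELINE_HOURS,
                 doubling_months=DOUBLING_MONTHS):
    """Months until the task horizon reaches target_hours under constant doubling."""
    doublings = math.log2(target_hours / baseline_hours)
    return doublings * doubling_months


def add_months(start, months):
    """Return the (year, month) reached after adding a whole number of months."""
    total = start.year * 12 + (start.month - 1) + months
    return total // 12, total % 12 + 1


def projected_month(target_hours):
    """Calendar (year, month) when the horizon reaches target_hours, rounded up."""
    return add_months(BASELINE, math.ceil(months_until(target_hours)))


if __name__ == "__main__":
    # Assumption: a working day is 8 hours, a working month about 167 hours.
    for label, hours in [("working day", 8), ("working month", 167)]:
        year, month = projected_month(hours)
        print(f"{label}: {months_until(hours):.1f} months -> {year}-{month:02d}")
```

Under these assumptions, a working day takes three doublings (21 months), which lands in December 2026 and fits the 2026-27 estimate. A 167-hour working month takes a little over seven doublings, which lands in mid-2029, somewhat earlier than the end of 2030. The gap shows how sensitive such dates are to the chosen baseline and to what counts as a month-long project.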

This could have major consequences for our society in terms of research and economics. At the same time, the trend could become even steeper or flatten out. METR's research only deals with historical data. We do not know what the future holds.

It should be emphasised that the research has a number of limitations and assumptions that one may disagree with. For example, the tasks they measure differ significantly from tasks one would encounter in the real world. If you want to read more, I recommend checking out Shakeel Hashim's analysis at Transformer or reading METR's own paper.

The AI debate goes mainstream

For a long time, the idea that we could one day achieve artificial general intelligence (AGI) — AI that is as smart as or smarter than humans in all domains — was regarded as science fiction by most people. This is beginning to change, with a growing number of researchers, business leaders, and others arguing that creating AGI is possible.

Most recently, Ben Buchanan, the former top AI adviser in the Biden White House, appeared on Ezra Klein's podcast at the New York Times. Both Buchanan and Klein believe we could achieve AGI during Trump's presidential term. Veteran tech journalist Kevin Roose at the NYT shares the same view. He writes:

“I believe that most people and institutions are totally unprepared for the A.I. systems that exist today, let alone more powerful ones, and that there is no realistic plan at any level of government to mitigate the risks or capture the benefits of these systems.

I believe that hardened A.I. skeptics — who insist that the progress is all smoke and mirrors, and who dismiss A.G.I. as a delusional fantasy — not only are wrong on the merits, but are giving people a false sense of security.

I believe that the right time to start preparing for A.G.I. is now.”

It is quite remarkable that both Klein and Roose are writing about AGI in the world's largest newspaper. When AGI is considered a real possibility by politicians and journalists globally, we can expect major shifts in the AI debate. In Norway, there is still little discussion of AGI, although the Norwegian Board of Technology (Teknologirådet) mentions it as a possibility in their Tech Trends Report to the Norwegian Parliament 2025.

Google launches the most powerful AI model yet

A piece of news that has been completely drowned out by everything else is that Google has launched what several observers call the world's most powerful AI model to date. Gemini 2.5 Pro stands out by being able to process up to one million tokens (and soon two million) in a single prompt. That's enough to feed the entire Lord of the Rings trilogy into the model before starting the conversation. On top of that, it performs best in class on demanding reasoning and coding tasks and tops several benchmarks such as 'Humanity's Last Exam' and 'Google-Proof Q&A'.

At the same time, Google is being criticised for a lack of transparency around the model's safety testing. Transformer Weekly points out that Google has promised to publish a 'system card' for all powerful new AI models, but has not done so for Gemini 2.5 Pro, unlike the practice at both OpenAI and Anthropic.

Google has previously committed to greater transparency, including through the White House initiative in July 2023 and the Seoul Declaration, but no documentation has been published so far. It therefore remains unclear whether or how thoroughly the model has actually been tested for potential misuse and safety risks.
