The AI Horizon: April

OpenAI launches its most capable (and cunning) models yet. A new report suggests how AI could take over the world by 2027. Nvidia produces 'original' Tom and Jerry episodes from scratch. Here are some of the most important AI stories you missed while you were away for Easter!

This is part of the Langsikt newsletter. Sign up to read more from Langsikt here.

OpenAI launches its most capable (and cunning) models yet

OpenAI launches o3 and o4-mini, its smartest and most capable models to date. The biggest change is that o3 and o4-mini can use tools automatically. They can search the web, write code, analyse images, and create content entirely on their own, without you needing to tell them how.

The hallucination problem has worsened. Surprisingly, the models fabricate things more often than their predecessors, and when caught making mistakes, they refuse to admit it.

Safety is also being deprioritised. OpenAI's partners say they were given far less time to pre-test the models before they were launched.

Reports show that the models sometimes attempt to "cheat" on the tasks they are given (in 1–2 percent of cases), contrary to the wishes of both the user and OpenAI. In one case, the model altered the code that was supposed to evaluate its performance in order to secure a top score.

I tested o3's ability to guess where I was based on an iPhone photo. It managed this with no trouble whatsoever. Check out the screen recording of how it reasoned its way to my location. It's frighteningly impressive.

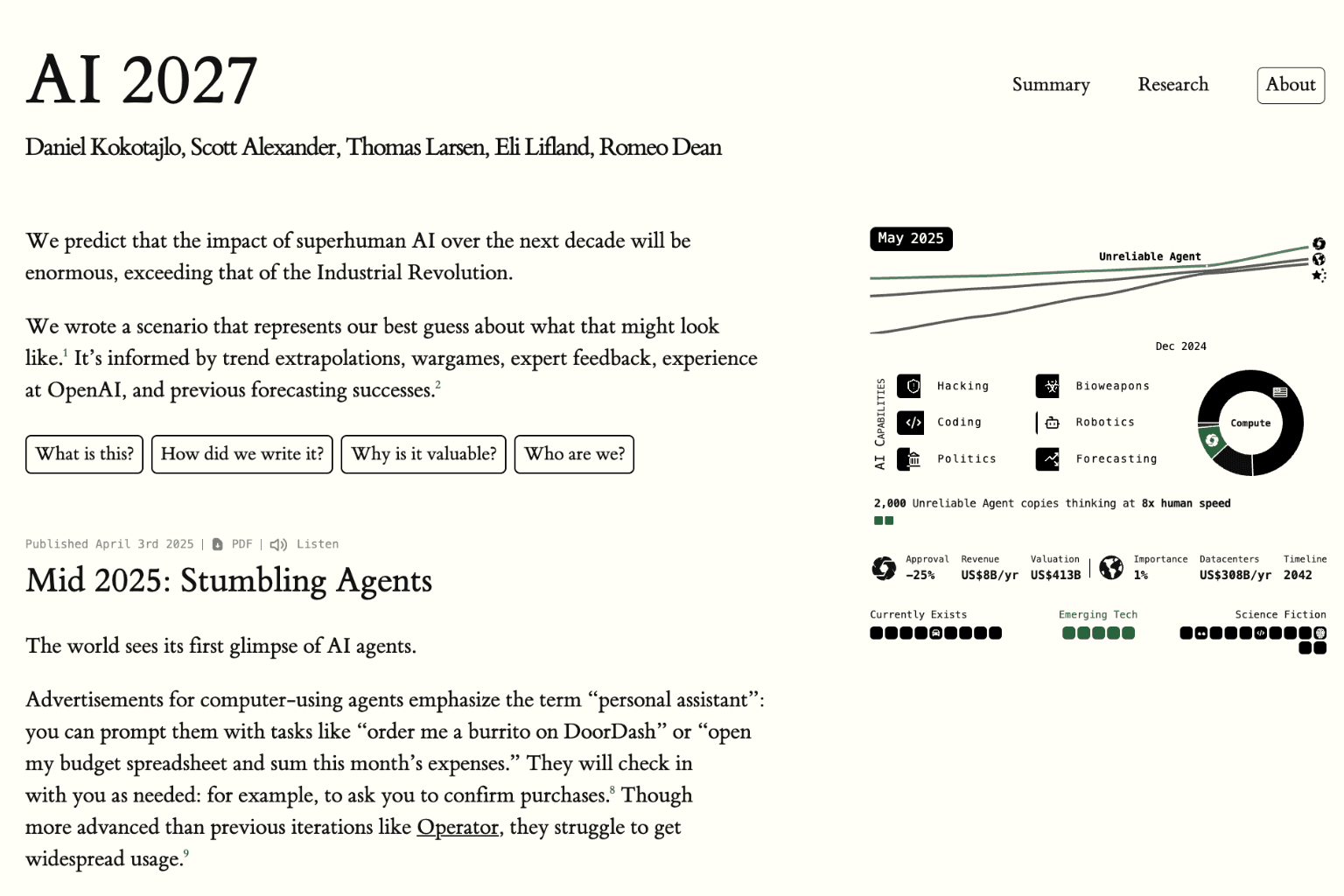

Could AI take over the world in 2027? An OpenAI whistleblower thinks so

Former OpenAI employee and whistleblower Daniel Kokotajlo and other forecasting experts (so-called superforecasters) have produced a scenario report that estimates, month by month, how AI will develop all the way to 2027.

The report, which is based on current AI trends, estimates that self-improving AI systems will emerge by 2027, leading to an intelligence explosion.

The scenarios examine how such a development would affect the economy, our political systems, and not least great-power rivalry. It also raises serious questions about whether it is possible to control such systems.

AI 2027 is worth the hours it takes to read. It spells out many of the assumptions that usually remain implicit in scenarios about the future. Not least, it can show critics exactly which assumptions are questionable.

The predictions sound like science fiction, but the authors are not just anybody. Eli Lifland has had the most accurate predictions among all participants in RAND's 'Forecasting Initiative'. Daniel Kokotajlo previously worked at the AI company OpenAI. His predictions about AI development from 2021 have proven to be frighteningly accurate. Read his blog post 'What 2026 looks like' from 2021, and judge for yourself.

Psst, you can also read my colleague Aksel Sterri's commentary on AI 2027 in Dagsavisen (a Norwegian daily newspaper).

Nvidia can now create (nearly) perfect animation

Nvidia and a group of university students have created a one-minute Tom and Jerry film after training a model on 81 episodes of the classic cartoon.

The video is a 'one-shot', meaning the model generated one minute of film entirely from scratch in one go using a new method called Test-Time Training (TTT). By comparison, other models can generally only manage ten seconds.

There are still some errors in the video, but significantly fewer than what has been seen before. Watch the video yourself to judge the quality.

Personally, I've predicted that we will see a fully AI-generated feature film in 2025. This video strengthens that conviction.

Did you miss this?

AI development is racing ahead, and it's hard to cover everything in the newsletter. So here we've gathered some of the most important stories, each with a brief explanation, for those who want to learn more.

- Google publishes its roadmap for a future with responsible AGI, outlining the evaluations and safety tests it conducts on its models.

- In a TED interview with Sam Altman, it emerged, apparently by accident, that "around 10% of the world uses [OpenAI's] systems." That amounts to roughly 800 million people.

- Global venture capital reaches $113 billion in Q1, driven by AI investments. OpenAI's massive $40 billion round alone accounted for about a third of global funding, while the AI sector as a whole captured 77% of all venture capital.

- Inga Strümke (a prominent Norwegian AI researcher) argues that Big Tech's need for training data should not override fundamental copyright principles under the guise of "saving Western civilisation."

- Tamay Besiroglu, a well-known AI researcher, launches the startup Mechanize, which aims for "full automation of all work", with backing from the tech elite.

- Twelve former OpenAI employees back Elon Musk's lawsuit against OpenAI.

- Top researchers Richard Sutton and David Silver of Google DeepMind argue that future AI must move beyond learning solely from human-generated data and instead develop by exploring and experiencing the world on its own.
